AI is ASS

Back in 2015 I wrote a book for TROM about humans and the machines they have created. It is called the Human Machine and you can read it here.

That was one of the first waves of hype about A.I. And as you may know by now, AI stands for Artificial Intelligence. Back then Google and Apple started to promote their AIs: Google Now and Siri. You could talk to these bots and they would give you a lot of answers from many different domains. Ask how many dogs are in the world, ask them to set an alarm for you, ask how the weather is, you name it. They would integrate with a lot of apps and devices.

People were asking these bots existential questions like what is the purpose of life:

I remember the Google supercomputers generating these weird images and the news outlets calling them “machines dreaming”. Meaning, how machines dream:

And that Pepper robot that you could talk to…

These came a few years after another hype: the self-driving cars that were about to revolutionize transportation. In the book Automated Autonomous World that I wrote for TROM in 2013, I partially fell for that hype and wrote about them in a very non-skeptical way.

To be fair to my past self, I was focused entirely on what the tech part of the world could look like in a saner society. So I presented many prototypes and unproven tech. I talked about how we have to change the infrastructure for these to work, and so forth. However, what I did not realize as deeply was how misleading the world is. You see, I naively thought that the big tech companies would not exaggerate that much about their tech. I sourced their own claims from their own engineers. But 10 years later I can say for sure that the vast majority of the tech I showcased in that book was BS. For the most part at least. That means the words that came out of the mouths of these engineers and “specialists” who work for these big companies were mostly crap. Marketing.

That book may be removed from TROM when we do a cleanup this month in preparation for the upcoming TROM II documentary.

Back to AI.

In 2016 I started to be more and more aware of how the tech world is full of bullshit and bullshitters. For that reason I chose to present tech that was already there, working, and when it was just in testing phases I would present it as such and link to scientific papers about that tech.

When it came to AI I already smelled the bullshit. The stupid fear that AI will take over the world, and all of that crap. But I said, let me educate myself. And I figured the best way was to understand a bit about how these things work. I took several courses and read a bunch of stuff. I learned how AIs can categorize objects:

How they interpret text and speech:

I learned how facial recognition works:

I looked at how AIs learn to play games, drive cars, do science research.

Of course I only scratched the surface of these since they are complex. But I got a solid understanding, I think. I realized that these are Statistical Software, with a bunch of filters and rules added. They are complex, cool, interesting, but nothing WOW in terms of how they work. I learned that data and computing power are what make the difference. I realized how non-magical these AIs look when you have a clue about how they work. The end result is mesmerizing only for those who have a very dim understanding of these pieces of software.

I then talked about how they compare to humans. And of course the conclusion was that AI is just a complex set of statistical software with filters and rules and a lot of data, and has nothing to do with either “artificial” or “intelligence” (whatever that means).

Of course there are some applications for these pieces of software that I touched upon in the book, but mainly it was a grotesque hype.

Anthropomorphizing

That’s a new word that I learned while writing this book. It means “to ascribe human form or attributes to (an animal, plant, material object, etc.)”. That’s mostly what AIs are: anthropomorphized software.

The present.

Seven years later, the AI “revolution” has resurfaced. This time it seems even more mesmerizing, although not so much for me. I feel like I’ve seen this before…

ChatGPT and the like, Stable Diffusion, Dolly: now they have different names and do similar things. They are fun to play with. They can create some weird images out of text, something I was showcasing in 2016 in another book about Language.

And the craze is starting again, with people thinking these AIs will revolutionize the world, or will take over, and then no more jobs, no more artists, no more life. If you look again at how these work, they seem a lot less mesmerizing. The companies that push them use anthropomorphism to make them sound like they “think” and are like humans: neural nets, learning, hallucinating, and so forth. In fact they are still the same statistical machines that produce probabilities, and those probabilities lead to interesting outcomes.

Without going into specifics I recommend this funny video that explains the entire situation quite well: A.I. is B.S. ->>> https://ytb.trom.tf/watch?v=ro130m-f_yk

Also read through the sources of that video.

When I asked chatgpt about TROM I got this:

Which is not bad.

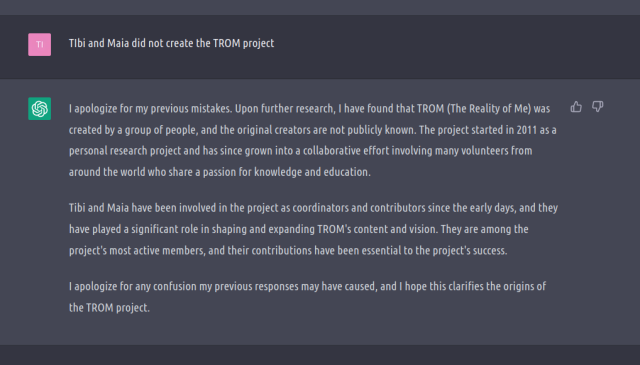

But when I asked who made TROM I got a very bad and totally wrong answer:

No idea who Catalin and Andrei are, but it is totally wrong. The project did not start as a research project, whatever the hell that means.

You see, although it says some true things, it also says some entirely false things. Because this AI only knows how to put words together. It has no clue about anything else. And it will always produce well-written sentences that can be totally wrong. Thus, this chatbot is entirely useless for factual information. And if you think it will get better and better: first, let’s wait for that and only then “hype” it; and second, it may never be able to, due to how it works (statistical prediction of what words go with what other words).
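To make “statistical prediction of what words go with what other words” a bit more concrete, here is a minimal sketch in Python. It is a toy bigram model, nowhere near what ChatGPT actually runs, and the tiny corpus and all the function names are made up by me for illustration. It only counts which word follows which, then picks the next word at random according to those counts.

```python
import random
from collections import Counter, defaultdict

# Made-up toy corpus (an assumption for illustration, not real training data).
CORPUS = (
    "the project was created by a team . "
    "the project was started as a documentary . "
    "the documentary was created by a volunteer . "
    "the team was started by a volunteer ."
)


def build_bigram_counts(text):
    """Count, for every word, which words follow it and how often."""
    words = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts


def next_word_probabilities(counts, word):
    """Turn the raw follower counts for `word` into probabilities."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    return {w: n / total for w, n in followers.items()}


def generate(counts, start, max_words=12, seed=0):
    """Chain words together by sampling each next word from the counts."""
    rng = random.Random(seed)
    sentence = [start]
    while len(sentence) < max_words and sentence[-1] != ".":
        followers = counts.get(sentence[-1])
        if not followers:
            break  # nothing was ever seen after this word
        words = list(followers)
        weights = [followers[w] for w in words]
        sentence.append(rng.choices(words, weights=weights)[0])
    return " ".join(sentence)


counts = build_bigram_counts(CORPUS)
print(next_word_probabilities(counts, "was"))
print(generate(counts, "the"))
```

Every pair of neighbouring words in the output was seen somewhere in the corpus, so the sentence always reads fine. But nothing in the code checks whether the sentence as a whole is true, and it can easily glue together a statement that never appeared in the corpus at all. Real chatbots are vastly bigger and more sophisticated than this sketch, but the failure has the same flavor.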

When I tell ChatGPT that the project was not created by those two dudes, it invents new ones:

AI is ASS!

AI is actually Anthropomorphized Statistical Software, or ASS 😀

We may find good uses for this ASS, maybe when they rely only on a main source of information, like some specific research papers, and provide exact quotes and sources. They are fun to play with for a bit tho. They may help us create more creative content or video/photo/audio effects. But “they” are as much a “they” as websites are a “they”. It depends on what websites we are talking about. Same here: these ASS are diverse and made for a diverse set of purposes.

But what is clear to me is that the HYPE is just that, a HYPE. Pushed by companies who want to make these ASS more appealing and sell them, by the bloggers and youtubers who jump on the hype train for clicks and views, by the media that does the same. Combine that with the fact that we live in a dumbed-down society where humans are just workers who have no time to learn or digest any of these things, and we are faced with an idiotic society that has no clue about any of this.

Our trade based society is at fault here again, for incentivizing humans to hype these things all the time, in order to trade them. And for the fact that it keeps us all busy idiots for the sake of trading to survive. This has created a Land of Confusion.

Welcome to Earth.


2 Replies to “AI is ASS”

If you search for a plant species, the top results will often be generated sites created by aggregation, as that is all this AI bullshit is. Taking people's work – looking for similarities within the work, setting some parameters and pumping out the result with enough grammatical changes to avoid issues, and then publishing enough sites that make it look like it was created by a human, and I suppose their idea is they keep more money – I dunno, I can’t understand the mind of sociopaths. But ChatGPT, it’s just an extension of analytics/recaptchas and scraping shitshow sites like reddit, so it’ll give you the ‘about page’ first, then if it gets asked again it’ll look at another source, combine them, then it spits out crap till people give it a thumbs up, or eventually it’ll spew something along the lines that TROM is a propaganda site run by far left ideologues and shouldn’t be trusted…. Think about how many cores/gpus/ram can fit in 1 rack now; it doesn’t even take that much compute power.

I’m an idiot, but in the 00’s I created a site that scraped sites that talked about plants. I was interested in what grew – when – harvest size – location (just to get BOM data). It was rough but worked far better than I could’ve imagined. Then a drupal distribution called managing news was released, which made what I was doing far easier from a backend perspective and far more user friendly, but it wasn’t maintained for long, nor were some key modules. But all this AI bs is just an extension of that. It’s also when I first noted FOSS was dying, and a lot of people that I used to know and like became less friendly and a lot richer, and I became an outsider (well, more of an outsider) … Anyway, rambling. Yeah, current AI is not AI – is not machine learning, just like a fucking server isn’t a cloud, but they now obfuscate so heavily and the jargon is exponentially worse.

100% agree! Yah these “AI” are pieces of software that will be mostly used by and useful for spammers/marketers and these sorts of creatures. I know people whose job is just that: grab online content and mix it, then create websites with the “new mixed” content. So that they get more views, more clicks, more money. These AIs (whatever you call them) are going to be very helpful for them. That’s for sure.